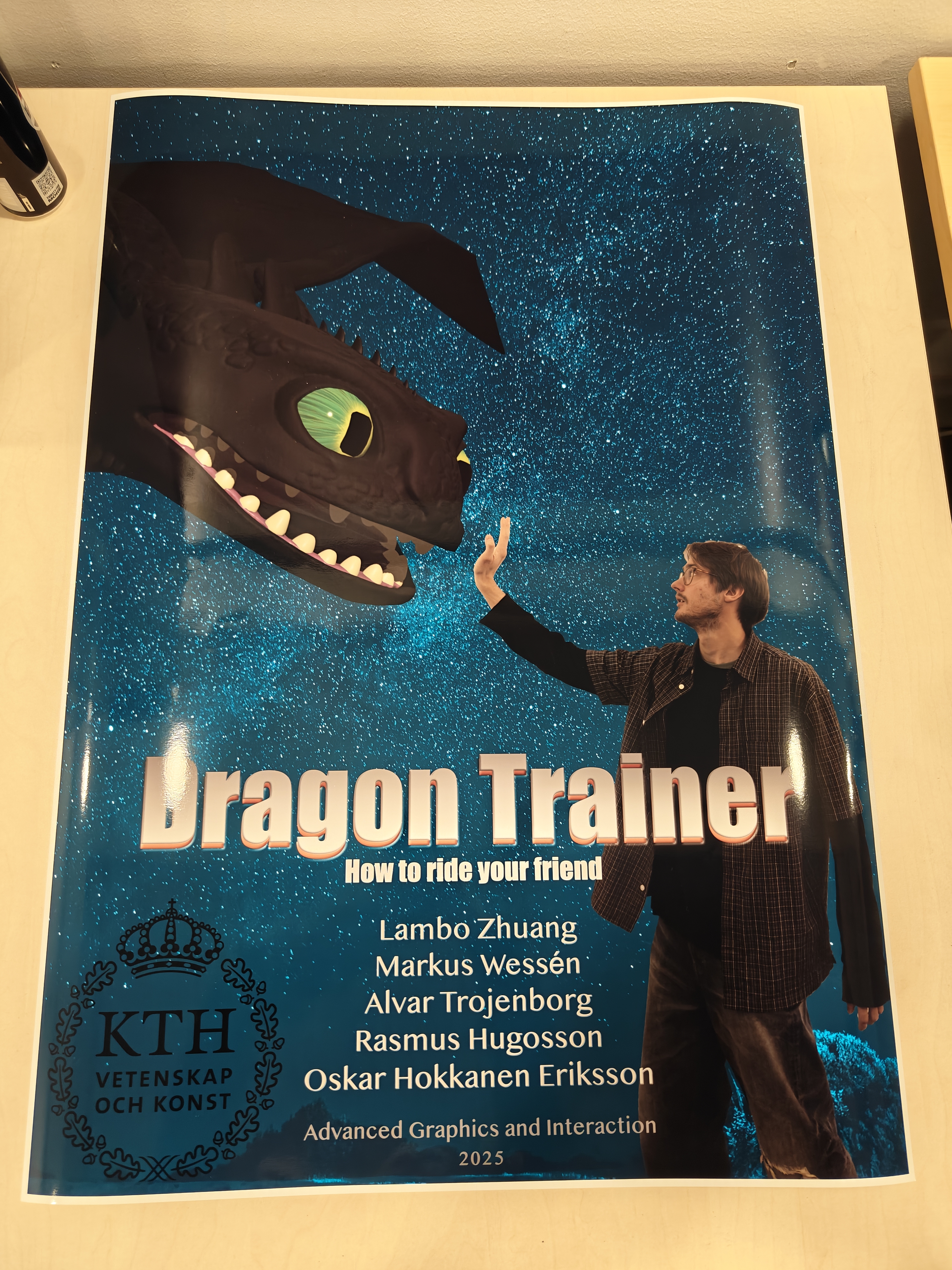

Dragon Trainer

A cooperative VR experience where two players team up as a dragon and its rider to explore a procedurally generated landscape. Heavily inspired by How to Train Your Dragon — just like Hiccup and Toothless, neither player can fly without the other. Developed for the DH2413 Advanced Graphics and Interaction course at KTH Royal Institute of Technology.

Demo

What It Is

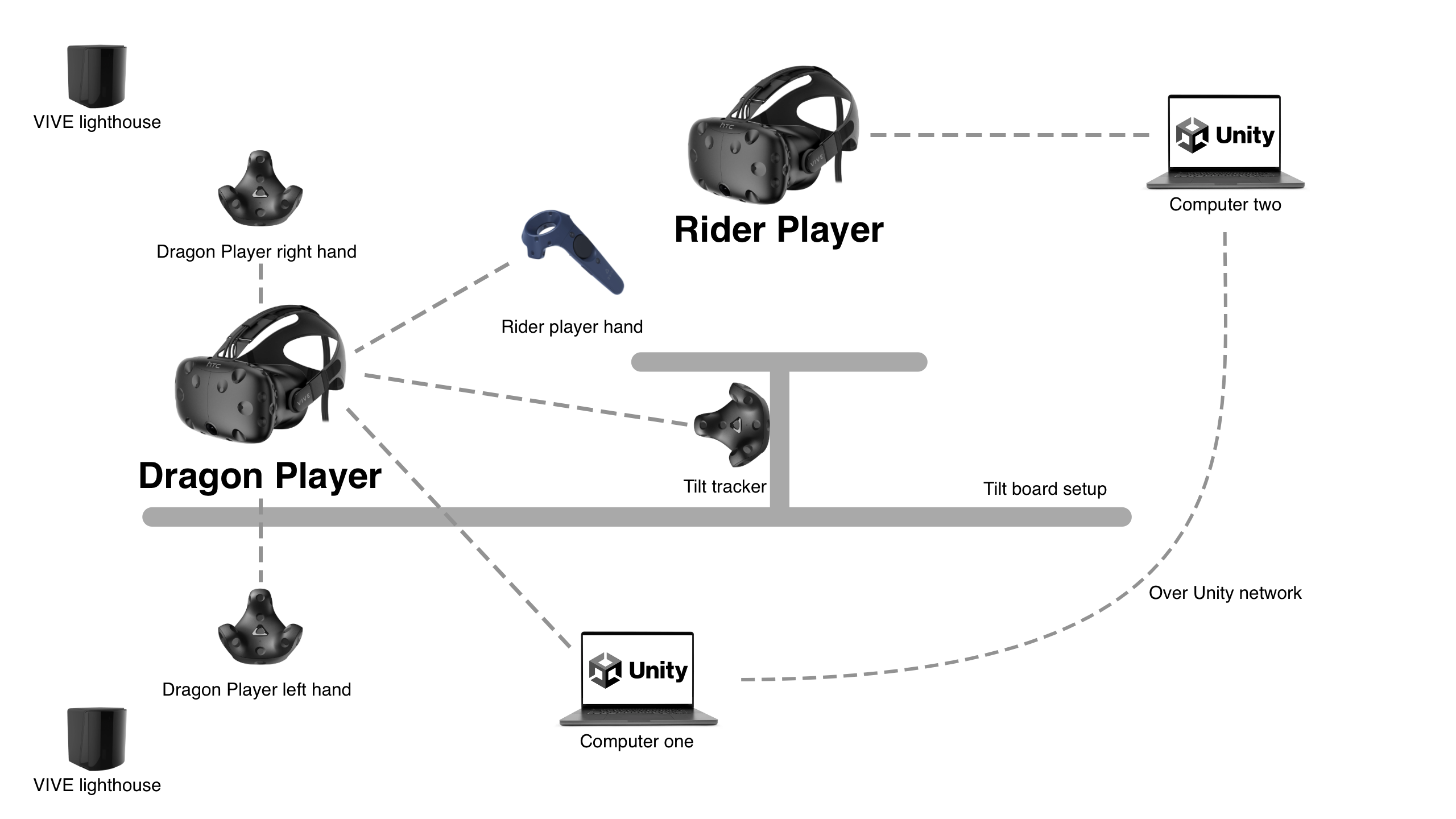

Dragon Trainer is a cooperative VR experience built around full-body, embodied interaction. One player is the dragon, one is the rider. Neither can fly well without the other. The dragon player flaps their arms (tracked via HTC VIVE trackers) to control speed and opens their mouth to breathe fire. The rider adjusts pitch with a controller. Both players share roll control by physically leaning together on a custom-built tilting platform.

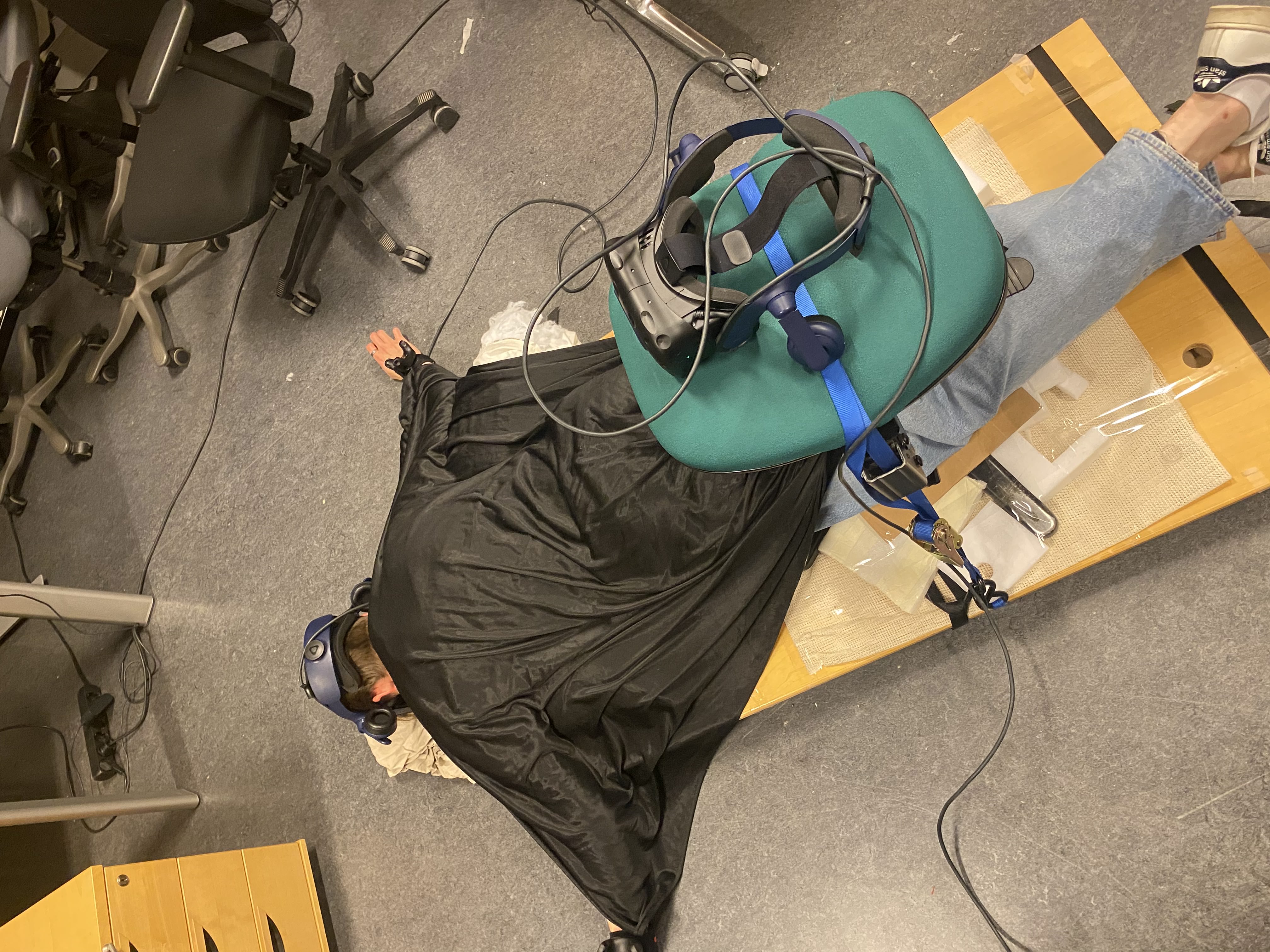

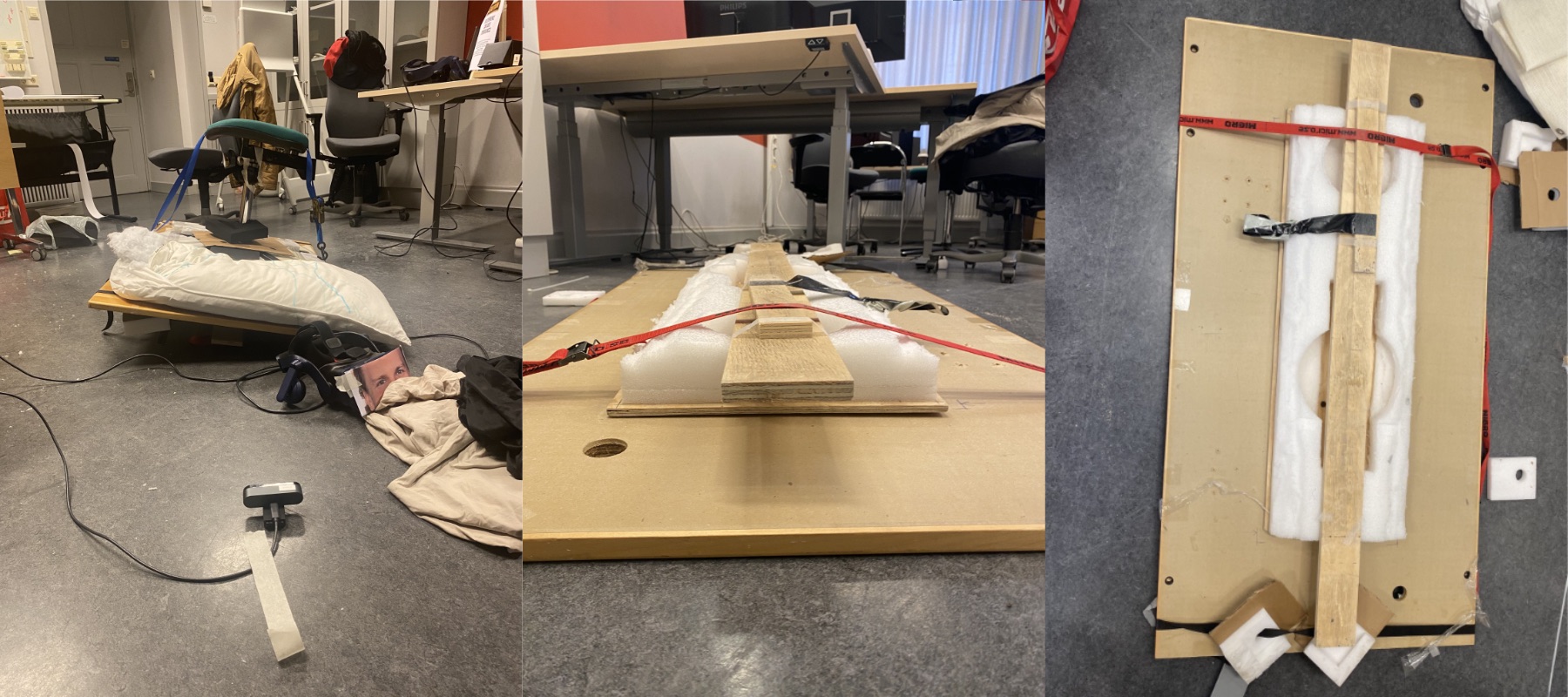

The Physical Platform

The platform is a key part of the experience. Inspired by Birdly, a full-body VR flight simulator, it consists of a board mounted on an inflatable mattress, allowing both players to tilt left and right together to steer the dragon’s roll. Early design discussions explored having players dangle their feet for immersion, but the team determined that mounting players on a moving board while they wore VR headsets carried too much risk. The tilting approach was also hypothesized to reduce cybersickness: when the physical body tilts in sync with the virtual world, the mismatch between vestibular and visual sensations that causes nausea is smaller.

The Game World

The world is infinite and procedurally generated. As players fly, new terrain chunks are spawned ahead using Perlin Noise sampling, with distance fog concealing the boundaries. Rings are placed automatically in the direction of travel for players to fly through, and scattered sheep herds roam the landscape, targetable with fire. Hitting a sheep with fire triggers a dissolve shader effect, gradually removing it from the scene.

What I Did

As part of a five-person team, my work covered flight mechanics, networking, and hardware integration:

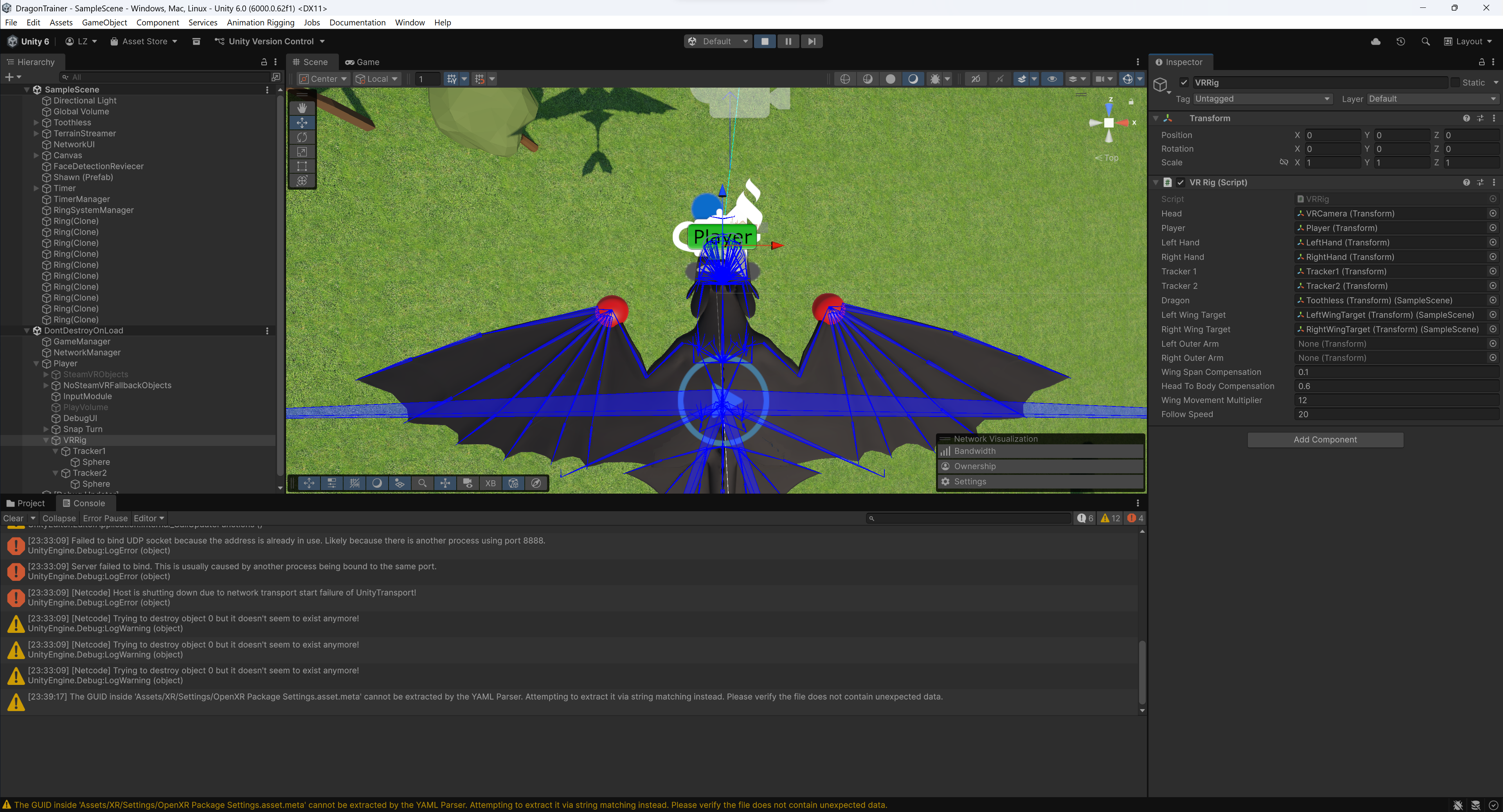

- VR interaction system: Integrated SteamVR and VIVE trackers for real-time flight control and wing animation mapping. The trackers read arm motion and fed it directly into the flight and animation systems.

- Dragon flight control: Worked on the custom physics-based flight control system that translates tracker input into realistic dragon movement, building on the airplane controller foundation set up by Rasmus.

- Procedural wing animation: Built wing animations using Unity’s animation framework that respond to the dragon’s physics state in real time.

- Multiplayer synchronization: Built the networking layer in Unity to keep all three instances (dragon, rider, and spectator camera) in sync, covering procedural animation and terrain generation across the sessions.
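The mapping from arm flapping to flight speed described above can be sketched roughly as follows. This is an illustrative Python sketch, not the project's Unity code: the class name `FlapSpeedEstimator`, the window length, and all speed constants are assumptions chosen for the example.

```python
from collections import deque


class FlapSpeedEstimator:
    """Turns a stream of hand-tracker heights into a target forward speed.

    All names and constants here are hypothetical, chosen for illustration.
    """

    def __init__(self, window=30, min_speed=5.0, max_speed=30.0, full_flap_rate=2.0):
        self.heights = deque(maxlen=window)   # recent mean tracker heights (m)
        self.min_speed = min_speed            # glide speed with no flapping (m/s)
        self.max_speed = max_speed            # speed reached at full effort (m/s)
        self.full_flap_rate = full_flap_rate  # vertical hand speed (m/s) counted as full effort

    def update(self, left_y, right_y, dt):
        """Feed one frame of tracker heights; returns the target speed.

        Assumes a roughly constant frame time `dt` (seconds).
        """
        self.heights.append((left_y + right_y) / 2.0)
        if len(self.heights) < 2:
            return self.min_speed
        samples = list(self.heights)
        # Mean absolute vertical velocity over the window:
        # faster or larger flaps produce a larger value.
        travel = sum(abs(b - a) for a, b in zip(samples, samples[1:]))
        rate = travel / (dt * (len(samples) - 1))
        effort = min(rate / self.full_flap_rate, 1.0)
        return self.min_speed + effort * (self.max_speed - self.min_speed)
```

Averaging over a short window keeps the speed from dropping to zero at the top and bottom of each flap, where the hands are momentarily still.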

Technical Details

| System | Technology |
|---|---|
| Engine | Unity |
| VR Hardware | HTC VIVE + VIVE Trackers |
| VR Integration | SteamVR |
| Terrain | Chunk-based Perlin Noise generation + distance fog |
| Sheep behavior | Boid algorithm (cohesion, separation, predator avoidance) |
| Fire effects | Unity VFX Graph + Shader Graph |
| Hit effects | Dissolve shader |
| Mouth detection | OpenCV via UDP |
| Audio | Dynamic wind sound tied to flight speed |

Procedural terrain is generated in chunks as players move through the world using Perlin Noise sampling. Distance fog conceals the chunk boundaries, giving the world the feel of an infinite open sky.
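As a rough illustration of chunk-based noise terrain, here is a minimal Python sketch. It is not the project's Unity code: the hash-based gradient noise is a simplified Perlin-style variant, and `CHUNK_SIZE`, `scale`, `height`, and the function names are assumptions. It also loads chunks in a square around the player rather than only ahead of them, which is a simplification.

```python
import math

CHUNK_SIZE = 16  # cells per chunk edge (hypothetical value)


def _gradient(ix, iy, seed):
    """Deterministic pseudo-random unit gradient for an integer lattice point."""
    h = (ix * 374761393 + iy * 668265263 + seed * 144665) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    angle = (h / 0xFFFFFFFF) * 2.0 * math.pi
    return math.cos(angle), math.sin(angle)


def _fade(t):
    """Perlin's quintic smoothing curve."""
    return t * t * t * (t * (t * 6 - 15) + 10)


def perlin(x, y, seed=0):
    """2D gradient noise sampled at world coordinates (x, y)."""
    x0, y0 = math.floor(x), math.floor(y)

    def corner(ix, iy):
        # Dot product of the lattice gradient with the offset to the sample.
        gx, gy = _gradient(ix, iy, seed)
        return gx * (x - ix) + gy * (y - iy)

    u, v = _fade(x - x0), _fade(y - y0)
    top = corner(x0, y0) + u * (corner(x0 + 1, y0) - corner(x0, y0))
    bottom = corner(x0, y0 + 1) + u * (corner(x0 + 1, y0 + 1) - corner(x0, y0 + 1))
    return top + v * (bottom - top)


def generate_chunk(cx, cz, seed=0, scale=0.1, height=20.0):
    """Heightmap rows for chunk (cx, cz).

    Sampling in world coordinates means neighbouring chunks agree on
    their shared edge, so no seam appears between them.
    """
    return [
        [height * perlin((cx * CHUNK_SIZE + i) * scale,
                         (cz * CHUNK_SIZE + j) * scale, seed)
         for i in range(CHUNK_SIZE + 1)]
        for j in range(CHUNK_SIZE + 1)
    ]


def chunks_needed(px, pz, radius=2):
    """Chunk coordinates within `radius` chunks of the player's position."""
    cx, cz = math.floor(px / CHUNK_SIZE), math.floor(pz / CHUNK_SIZE)
    return {(cx + dx, cz + dz)
            for dx in range(-radius, radius + 1)
            for dz in range(-radius, radius + 1)}
```

Because every height depends only on the world position and the seed, a chunk can be discarded when the players leave it and regenerated identically later.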

Sheep herds use a Boid algorithm with separate force calculations for cohesion, separation, and predator avoidance, producing organic-looking flocking behavior that reacts when the dragon approaches or opens fire. Spawning and despawning are tied to the procedural terrain system.
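The three force terms named above can be sketched like this. It is a minimal 2D Python illustration under assumed radii and weights, not the project's implementation; `steer_sheep` and every parameter name are hypothetical.

```python
import math


def steer_sheep(index, positions, predator,
                neighbor_radius=10.0, separation_radius=2.0, flee_radius=25.0,
                w_cohesion=1.0, w_separation=1.5, w_flee=3.0):
    """Steering force (fx, fy) for one sheep on the ground plane.

    `positions` is a list of (x, y) tuples for the herd and `predator`
    is the dragon's ground position. All radii and weights are
    illustrative assumptions.
    """
    me = positions[index]
    neighbours = [p for i, p in enumerate(positions)
                  if i != index and math.dist(me, p) < neighbor_radius]
    fx = fy = 0.0

    # Cohesion: pull toward the centroid of nearby sheep.
    if neighbours:
        cx = sum(p[0] for p in neighbours) / len(neighbours)
        cy = sum(p[1] for p in neighbours) / len(neighbours)
        fx += w_cohesion * (cx - me[0])
        fy += w_cohesion * (cy - me[1])

    # Separation: push away from sheep that are too close,
    # more strongly the closer they are.
    for p in neighbours:
        d = math.dist(me, p)
        if 0 < d < separation_radius:
            push = (separation_radius - d) / d
            fx += w_separation * (me[0] - p[0]) * push
            fy += w_separation * (me[1] - p[1]) * push

    # Predator avoidance: flee from the dragon once it is within range.
    d = math.dist(me, predator)
    if 0 < d < flee_radius:
        push = (flee_radius - d) / d
        fx += w_flee * (me[0] - predator[0]) * push
        fy += w_flee * (me[1] - predator[1]) * push

    return fx, fy
```

Keeping each term separate makes it easy to tune the weights independently, for example raising `w_flee` so the herd scatters more dramatically when the dragon dives.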

Fire effects were first attempted using compute shaders but ultimately implemented with Unity’s VFX Graph and Shader Graph, which offered a better balance of visual quality and development speed. Sheep hit by fire trigger a dissolve shader that gradually erases them from the scene.

Mouth-triggered fire relies on OpenCV, which runs as a separate process and communicates with the game over UDP. It detects in real time, via webcam, when the dragon player opens their mouth to breathe fire.
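The sending side of such a setup might look roughly like the Python sketch below. The detection method, message format, address, and port are all assumptions: the mouth-open ratio from four lip landmarks is one common heuristic, the landmark points are taken as given rather than extracted with OpenCV, and the JSON payload is a made-up format for illustration.

```python
import json
import socket


def mouth_open_ratio(upper_lip, lower_lip, left_corner, right_corner):
    """Lip gap divided by mouth width, from four (x, y) landmark points.

    Where the landmarks come from is left open here (hypothetical input).
    """
    gap = abs(lower_lip[1] - upper_lip[1])
    width = abs(right_corner[0] - left_corner[0])
    return gap / width if width else 0.0


def is_mouth_open(ratio, threshold=0.25):
    """Threshold is an illustrative guess and would need tuning per player."""
    return ratio > threshold


def encode_state(is_open):
    """Serialize the mouth state into a small UDP payload (assumed format)."""
    return json.dumps({"mouth_open": bool(is_open)}).encode("utf-8")


def decode_state(payload):
    """Inverse of encode_state, as the receiving side would apply it."""
    return bool(json.loads(payload.decode("utf-8"))["mouth_open"])


def send_state(sock, is_open, address=("127.0.0.1", 5065)):
    """Send one datagram; the address and port are placeholders."""
    sock.sendto(encode_state(is_open), address)
```

A sender would create the socket once with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` and call `send_state` every frame. UDP suits this kind of signal because a dropped packet is replaced by the next frame's state almost immediately.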

Audio features dynamic wind sounds whose pitch and volume scale with flight speed, plus sound effects when flying through rings.
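A speed-to-audio mapping of this kind can be as simple as a clamped linear interpolation. The Python sketch below is only illustrative; the function name and all ranges are assumptions, not the values used in the project.

```python
def wind_audio(speed, max_speed=30.0,
               min_volume=0.1, max_volume=1.0,
               min_pitch=0.8, max_pitch=1.6):
    """Map flight speed to (volume, pitch) for a looping wind sound.

    All ranges are hypothetical defaults chosen for the example.
    """
    # Normalize speed to [0, 1], clamping anything outside the range.
    t = max(0.0, min(speed / max_speed, 1.0))
    volume = min_volume + t * (max_volume - min_volume)
    pitch = min_pitch + t * (max_pitch - min_pitch)
    return volume, pitch
```

The two returned values would be applied to the audio source each frame, so the wind swells and rises in pitch as the dragon accelerates.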

Networking was one of the harder technical challenges. Keeping dragon position, wing animation, and terrain generation in sync across three instances (dragon, rider, and spectator) required careful design of the network object model. Merging Unity scenes across branches also proved unexpectedly painful, which is a lesson for future collaborative Unity projects.

Interaction Design

The project is heavily inspired by the How to Train Your Dragon movies — in particular, the relationship between Hiccup and Toothless, where neither can fly without the other. Hiccup controls the tail fin while Toothless provides the power; remove either and the flight falls apart. That dynamic was the thematic seed for the whole experience.

The core design challenge was making cooperation feel physically meaningful rather than just splitting a control scheme across two inputs. By anchoring roll to the shared tilting platform (both players must lean together) and mapping individual body actions (arm flapping, mouth opening) to distinct flight and combat inputs, the experience makes each player’s physicality matter. Neither can fully control the dragon alone.

The inspiration from Birdly was central here: the goal was always to make players feel like they were genuinely flying, not just pressing buttons. The full-body input (tilting, flapping, steering, and breathing fire) pushes toward that feeling even if the illusion is imperfect.

One acknowledged challenge is cybersickness. VR flight experiences are notoriously hard to tune; individual sensitivity varies widely, and the team learned that extensive playtesting is essential to find control parameters that feel good without inducing nausea.

A spectator camera provides a third-person view for observers, which added a performative quality to the experience during demonstrations.

Reflection

Dragon Trainer was one of the more technically ambitious projects I’ve been part of: VR hardware, real-time networking, procedural generation, computer vision, and a custom physical controller all in one. Getting those pieces to work together reliably under the time constraints of a course project required a lot of pragmatic decision-making: knowing when to cut scope, when to swap one approach for another (as the fire effect team did, moving from compute shaders to VFX Graph), and when to just make something good enough to test.

What still amazes me is that we pulled the entire system together in about a month. VR tracking, networked physics, procedural terrain, computer vision, and a physical tilting platform — each of those is a project on its own, and the fact that it all came together into a playable, coherent experience still feels like a small miracle. As our examiner generously put it, we “over-achieved in terms of pursuing a crazy project idea” and “somehow managed to make it not only work, but work really well.”

The cooperative constraint (neither player can fly alone) turned out to be the best design decision in the project. It forced strangers to communicate immediately and created a shared stake in the experience that carried the whole thing.

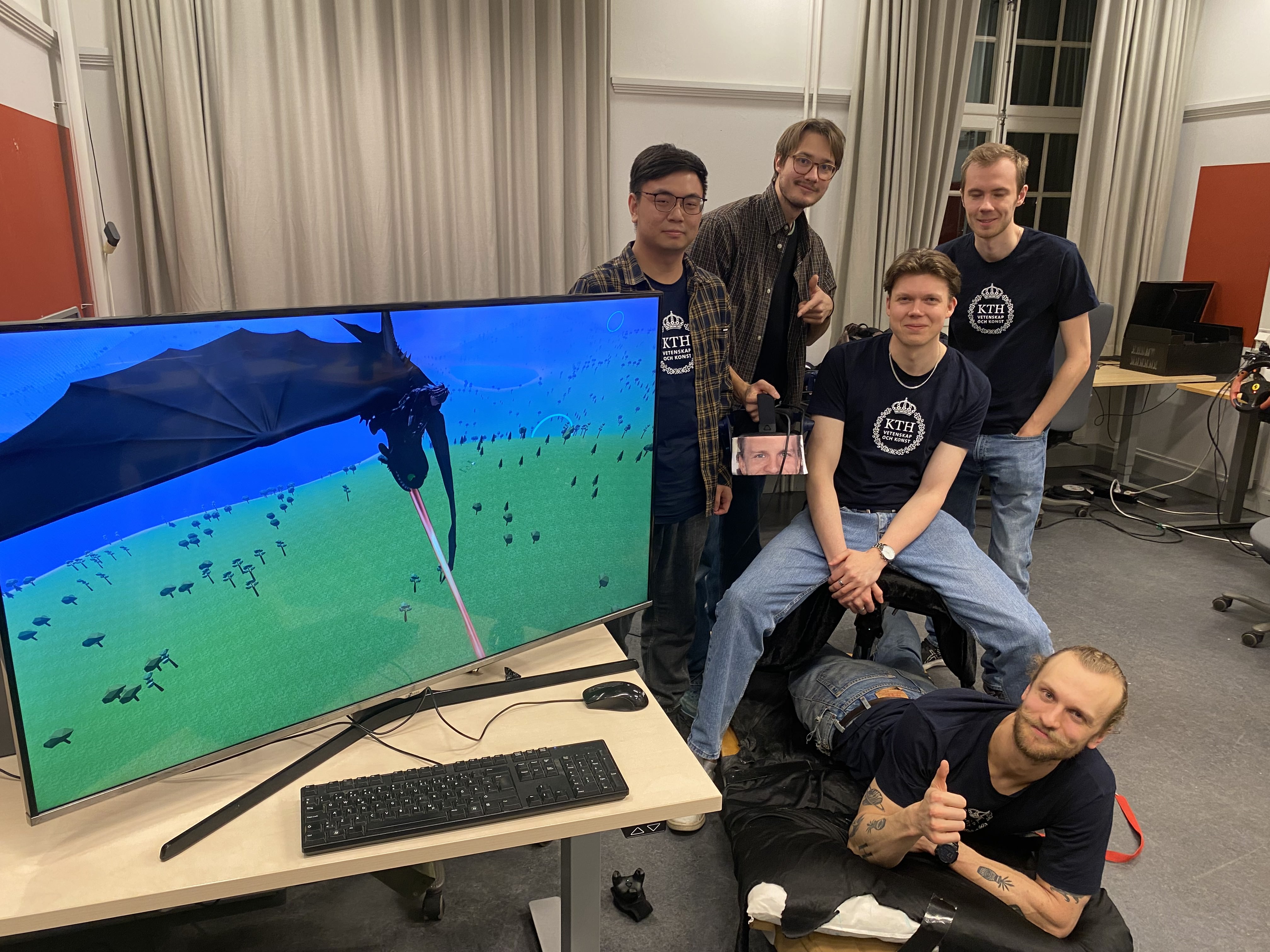

Thanks to Alvar, Markus, Oskar, and Rasmus for being great teammates throughout. Everyone specialised in something different and it made the project work in a way that a more homogeneous team probably couldn’t have. Building a VR dragon together is not something I’ll forget.

Gallery